ChatGPT Agents vs Claude Agents: Best AI Agent for Automation

The $20 price parity between ChatGPT agents and Claude agents collapses the moment your workflows call the API, where per-task costs can differ by up to 80%. This guide covers which agent wins each automation task in 2026 and how GPT-5.5's April launch changed the cost math. You'll leave with the routing logic and the numbers to budget your automation stack.

At $20 per month, ChatGPT Plus and Claude Pro look identical on the price tag. The ChatGPT agents vs Claude agents debate is more nuanced than that: ChatGPT leads on browser-based automation and cross-app connectors, while Claude leads on coding, long-document workflows, and cost-efficient high-volume API automation where token costs can diverge by up to 80%.

That $20 parity also hides a more recent shift. OpenAI released GPT-5.5 to the API on April 24, 2026 at exactly double GPT-5.4's price, so "ChatGPT API cost" can now differ by a factor of two depending on which model your workflow actually calls.

This guide unpacks three things: which agent wins each workflow type, how Custom GPTs compare to Claude Projects, and the per-task API economics that decide cost at scale. By the end, you'll know exactly which agent fits which job in your stack.

Quick Verdict — Best AI Agent by Workflow Type

Neither agent wins every workflow type. Claude dominates coding, long-document analysis, cost-efficient API automation, and persistent desktop work, while ChatGPT wins browser-based tasks, multimodal pipelines with image generation, and pre-built business connectors. The right pick follows the workflow, not the brand.

- Coding and PR automation: Claude Code with Agent Teams (parallel multi-file work, currently in research preview).

- Browser tasks, form-filling, web scraping: ChatGPT Agent Mode (virtual browser handles sessions and dynamic pages).

- Long-document analysis across files: Claude (1M-token context on Sonnet 4.6 and Opus 4.7, no long-context surcharge).

- Image-generation pipelines: ChatGPT (native DALL-E in one interface).

- High-volume API automation on a budget: Claude (Sonnet 4.6 at $3/$15; Haiku 4.5 at $1/$5 for simpler tasks).

- MCP custom integrations: Both platforms support MCP; Claude's native ecosystem runs deeper since launching the standard in 2024.

- Persistent desktop task automation: Claude Cowork (GA April 2026, multi-hour autonomous execution).

What Are ChatGPT Agents and Claude Agents?

AI agents are software systems that plan multi-step tasks, use external tools, browse the web, execute code, and interact with third-party apps autonomously, without requiring a human prompt at each step. Both ChatGPT and Claude have built full agent ecosystems on top of this capability.

OpenAI's agent stack centers on three products. Agent Mode runs browser-based automation inside ChatGPT Plus and above, handling form-filling, scraping, and booking through a virtual browser session. Custom GPTs are shareable assistants distributed through the GPT Store, with OpenAPI Actions that let them call any external API without custom code. Codex, the cloud-based coding agent, runs in secure sandboxes and integrates tightly with VS Code and GitHub.

Anthropic's lineup mirrors that breadth from a different angle. Cowork, generally available since April 2026, is a desktop agent for macOS and Windows that handles multi-hour knowledge work across files, browsers, and apps. Claude Code is the terminal-based coding agent in the same Pro subscription, with multi-agent Agent Teams currently in research preview. Computer Use, in research preview since March 2026, lets Claude control the screen directly when no connector exists.

How ChatGPT and Claude Handle Automation — Capabilities Side by Side

Both platforms have moved well past chat. Each ships a stack of agentic products built for distinct workflow types, and the differences become clearer when laid out side by side.

ChatGPT Agent Mode, Custom GPTs, and Codex

Agent Mode runs inside a sandboxed virtual browser, where Plus subscribers see roughly 40 monthly runs before hitting the cap. Business, Enterprise, and Pro tiers raise those limits significantly. Custom GPTs distribute through the GPT Store with OpenAPI-based Actions, so a single Custom GPT can call any external service that exposes a REST endpoint. Codex, OpenAI's cloud-based coding agent, runs in secure sandboxes and pairs with GitHub and VS Code. The model tier (GPT-5.4 or GPT-5.5) is selected per task. On the Business and Enterprise side, Workspace Agents bring pre-built connectors for Slack, Google Drive, SharePoint, GitHub, and Atlassian. The stack's standout strength is breadth: connector depth across mainstream business tools, plus native multimodal support spanning voice, image generation, and web browsing in one interface.

Claude Cowork, Claude Code, and Computer Use

Cowork is Anthropic's persistent desktop agent for macOS and Windows. It hit general availability across all paid plans in April 2026, starting with Pro at $20/month. The desktop app pairs with a plugin marketplace introduced in February 2026 and native MCP connectors for Slack, Notion, Asana, Jira, Microsoft 365, and Google Workspace. Claude Code is the terminal-based coding agent in the same Pro subscription, with multi-agent Agent Teams now in research preview for parallel work across large codebases. Computer Use, also in research preview since March 2026, lets Claude control the screen when no native connector exists, and Dispatch complements it by handing tasks off from phone to desktop. The defining strength here is depth: extensive coding automation, a 1M-token context window on Sonnet 4.6 and Opus 4.7 with no surcharge, and an MCP-native architecture from day one.

Claude Projects vs Custom GPTs — Which Fits Your Workflow?

The Claude Projects vs Custom GPTs decision is really about distribution and depth. Both let you build custom assistants on top of the base model, but they optimize for opposite goals: deep internal context on the Claude side, broad public reach on the OpenAI side.

| Feature | Claude Projects | Custom GPTs |
|---|---|---|
| Knowledge base scope | 200K context with automatic RAG expansion up to 10x on paid plans | Knowledge files retrieved into the GPT's context per query |
| File upload allowance | Up to 30MB per file, multiple files supported | File count and size capped per GPT |
| Sharing model | Private, or shared inside Team and Enterprise workspaces | Private, link-only, or public through the GPT Store |
| External API actions | Through MCP connectors on paid plans | OpenAPI Actions for any REST endpoint |
| Image generation | Not native; needs a third-party integration | Native through OpenAI's image model |
| Custom instructions | Yes, with Styles and Skills for brand voice consistency | Yes, with instruction text and conversation starters |
| MCP compatibility | Native (Anthropic created the standard) | Supported through Apps SDK and Developer Mode |
| Team collaboration | Built-in on Team and Enterprise plans | Workspace controls on Business and Enterprise |

The split is intuitive once the goal is clear. Build a Claude Project for a deeply contextualized internal assistant: a research workspace, internal docs companion, or brand-voice writing tool that stays within your team. Build a Custom GPT when public distribution, external API integrations, or native image generation are the requirements.

Both ecosystems now support MCP, so developer-built workflows can sit on top of either without locking into one stack. That convergence fits the broader shift seen across our Claude vs ChatGPT 2026: Best AI for Content, Code & Research comparison.

The API Cost Reality Behind the $20/Month Comparison

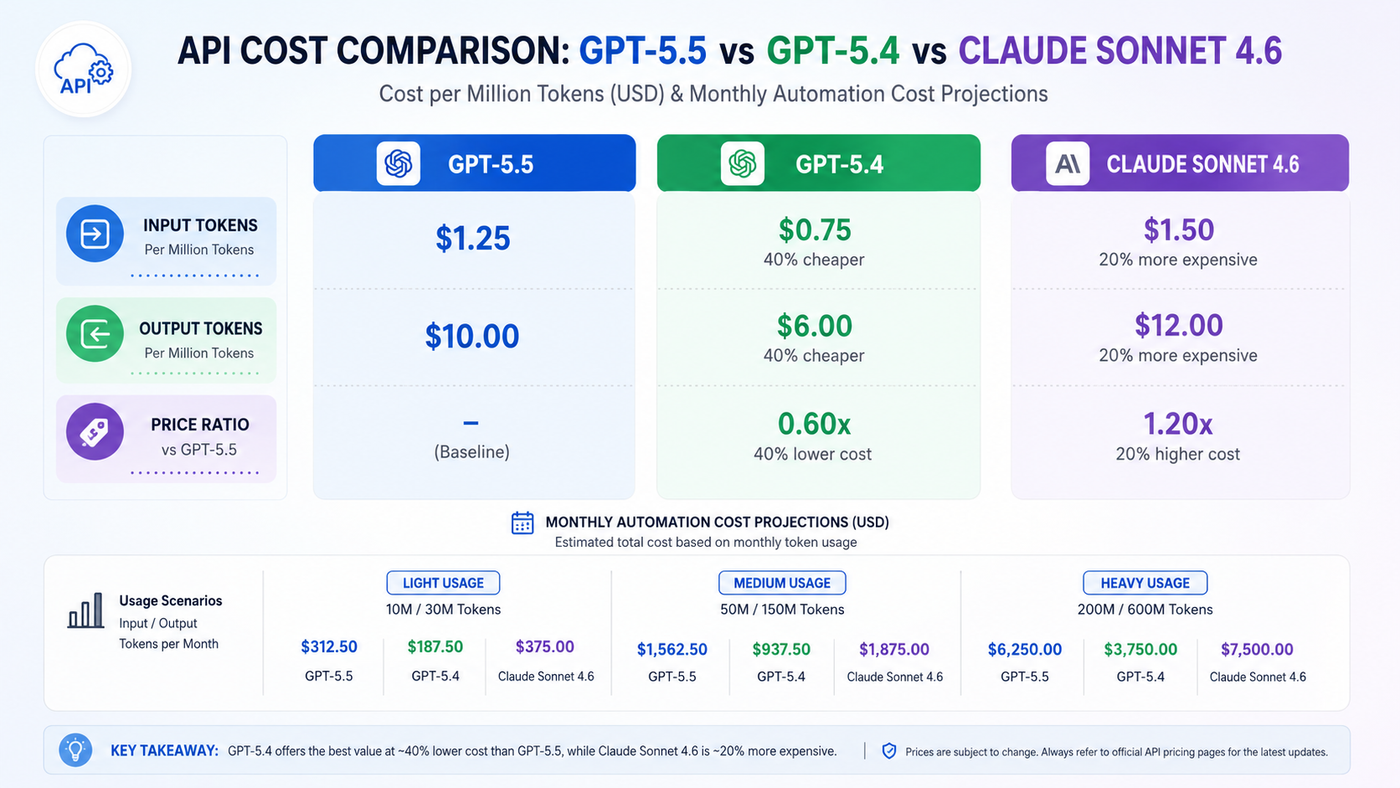

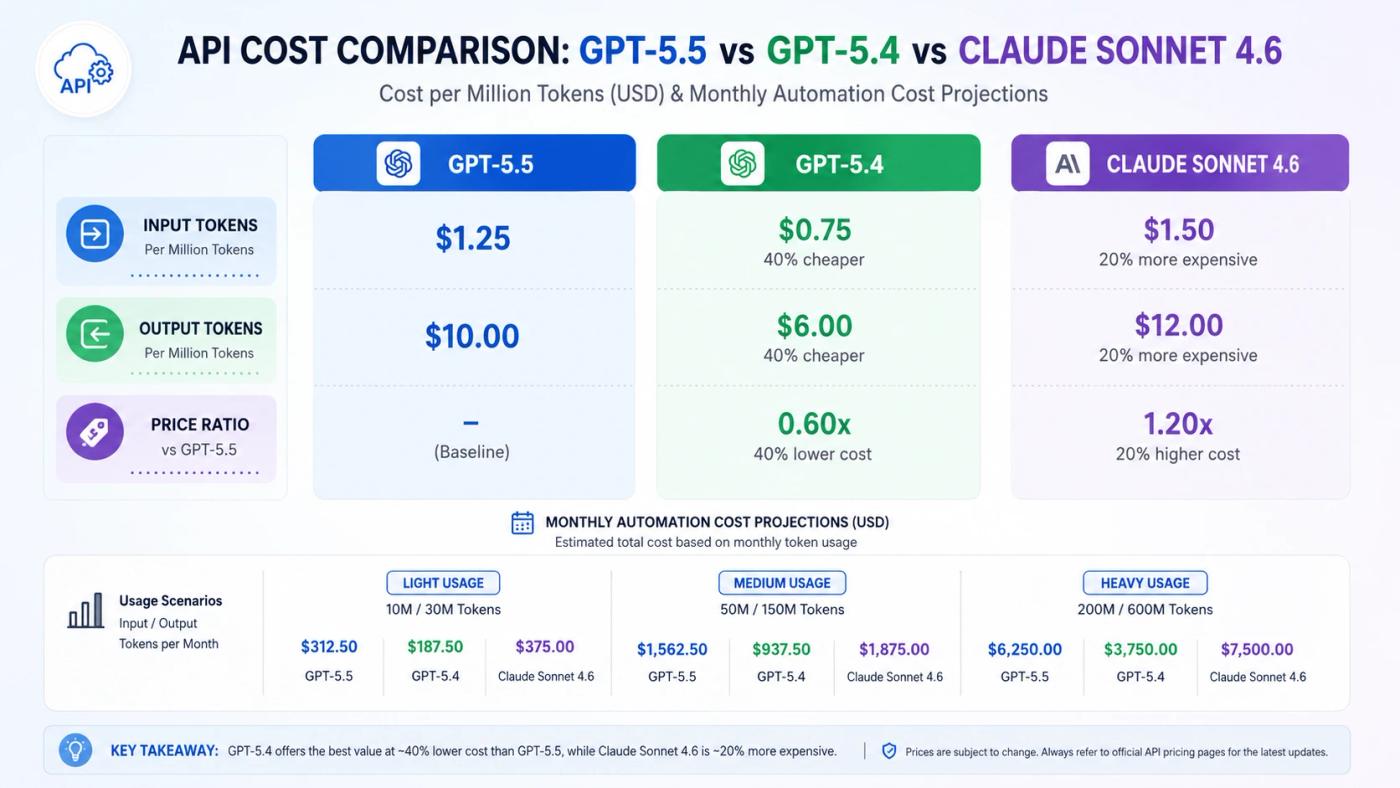

Both platforms charge $20/month at the subscription tier, but automations that call the API directly face three distinct price points: GPT-5.4, Claude Sonnet 4.6, and GPT-5.5. At comparable quality, Claude Sonnet 4.6 runs roughly 45% cheaper per task than GPT-5.5 on an input-heavy workload, while GPT-5.4 is still available at nearly the same price as Sonnet.

GPT-5.5 hit the API on April 24, 2026 at $5 per million input tokens and $30 per million output tokens, exactly double GPT-5.4's $2.50/$15 rate. Many cost models built before that launch don't reflect the increase, so a workflow expecting $30/month for API tokens could now be running closer to $60.

Consider a customer-support automation running 1,000 monthly tasks at 6K input and 1K output tokens each, which totals 6M input and 1M output tokens per month. Claude Sonnet 4.6 at $3/$15 lands at $33/month (6 × $3 + 1 × $15). GPT-5.5 at $5/$30 lands at $60/month, about 1.8x the cost of Sonnet. GPT-5.4 at $2.50/$15 actually edges out Sonnet at $30/month, since its input rate is lower. The cost gap to Claude only opens up against GPT-5.5; teams still routing to GPT-5.4 sit roughly at parity with Sonnet.

📌 Pro Insight: The Claude cost advantage only holds against GPT-5.5. Teams still routing to GPT-5.4 sit within $3/month of Sonnet 4.6 at the same volume, which makes model tier selection within each platform a bigger lever than vendor choice.
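The arithmetic behind those three monthly figures can be reproduced with a short sketch. It uses only the per-million-token prices quoted in this article; the dictionary keys are illustrative labels, not official API model identifiers, and the function name `monthly_cost` is hypothetical.

```python
# Prices in USD per million tokens (input, output), as quoted in this article.
# The keys are illustrative labels, not official API model identifiers.
PRICES = {
    "claude-sonnet-4.6": (3.00, 15.00),
    "gpt-5.5": (5.00, 30.00),
    "gpt-5.4": (2.50, 15.00),
}


def monthly_cost(model, tasks, input_tokens, output_tokens):
    """Return the monthly API cost in USD for a fixed per-task token profile."""
    input_price, output_price = PRICES[model]
    input_millions = tasks * input_tokens / 1_000_000
    output_millions = tasks * output_tokens / 1_000_000
    return input_millions * input_price + output_millions * output_price


# 1,000 tasks per month at 6K input and 1K output tokens each.
for model in PRICES:
    print(f"{model}: ${monthly_cost(model, 1_000, 6_000, 1_000):.2f}")
# claude-sonnet-4.6: $33.00
# gpt-5.5: $60.00
# gpt-5.4: $30.00
```

Swapping in your own task count and token profile shows how quickly the gap moves: output-heavy workloads narrow GPT-5.4's edge over Sonnet, because both charge the same $15 output rate.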

Here's what most teams miss. The biggest cost levers aren't vendor selection at the same tier; they're the Batch API (50% off on both platforms for non-time-sensitive jobs) and Anthropic's prompt caching (up to 90% off repeated input tokens). For high-volume classification or routing, Claude Haiku 4.5 at $1/$5 is the cheapest current Claude option.
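To see how much those two levers move the bill, here is a rough sketch applied to the same 6M-input, 1M-output Sonnet 4.6 workload. It rests on simplifying assumptions that are not taken from any vendor's billing documentation: cached input is billed at 10% of list price (the "up to 90% off" case), cache-write surcharges are ignored, and the batch discount is a flat 50% on the whole bill. The function name, parameters, and the guess that 5M of the 6M input tokens are a repeated prompt prefix are all hypothetical.

```python
def discounted_cost(input_millions, output_millions, input_price, output_price,
                    cached_input_millions=0.0, cache_read_multiplier=0.10,
                    batch_multiplier=1.0):
    """Estimate a monthly bill in USD after caching and batch discounts.

    Assumptions (simplified, hypothetical): cached input tokens cost
    cache_read_multiplier times the list price, cache writes carry no
    surcharge, and batch_multiplier scales the entire bill.
    """
    uncached_millions = input_millions - cached_input_millions
    input_cost = (uncached_millions * input_price
                  + cached_input_millions * input_price * cache_read_multiplier)
    output_cost = output_millions * output_price
    return (input_cost + output_cost) * batch_multiplier


# Sonnet 4.6 at $3/$15 on 6M input and 1M output tokens per month.
list_price = discounted_cost(6, 1, 3.00, 15.00)
batch_only = discounted_cost(6, 1, 3.00, 15.00, batch_multiplier=0.50)
# Assumes 5M of the 6M input tokens are a repeated, cacheable prefix.
cache_only = discounted_cost(6, 1, 3.00, 15.00, cached_input_millions=5)

print(f"list price:   ${list_price:.2f}")   # $33.00
print(f"batch only:   ${batch_only:.2f}")   # $16.50
print(f"caching only: ${cache_only:.2f}")   # $19.50
```

Under these assumptions either lever alone saves more than switching from Sonnet 4.6 to GPT-5.4 would. Whether the two discounts stack, and how cache writes are priced, should be checked against each vendor's current pricing page.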

How n8n Changes the Cost Math for Automation Teams

For teams orchestrating agents at scale, n8n shifts the cost equation. Both Claude and ChatGPT plug into n8n through the LangChain-based AI Agent node, and a single agent run counts as one execution regardless of how many internal nodes it triggers. The bigger lever is hosting: running n8n self-hosted via Docker eliminates the platform fee entirely, leaving only API token costs to budget for. Pair self-hosted n8n with Sonnet 4.6 and prompt caching enabled, and you land on one of the most cost-efficient production architectures available for high-volume agentic workflows in 2026.

Real-World Automation Scenarios — Which Agent Wins Each Task?

Routing by task type is what separates teams running smooth agent stacks from teams running expensive ones. These five scenarios show where each platform genuinely earns the work.

Scenario 1 — Automated code review and PR summaries: Claude Code with Agent Teams. Blind code-quality reviews from a 500+ developer community survey in early 2026 rated Claude Code's output cleaner 67% of the time, citing fewer hallucinated API calls and stronger multi-file codebase navigation.

Scenario 2 — Lead enrichment from LinkedIn with CRM update: ChatGPT Agent Mode. The virtual browser plus pre-built Workspace connectors for Salesforce, HubSpot, and Google Workspace cover this end-to-end. Claude's Computer Use remains in research preview and lacks the connector depth for production-grade lead pipelines.

Scenario 3 — Weekly financial reports from multiple spreadsheets: Claude Cowork with Excel and PowerPoint Skills. The 1M-token context handles cross-document analysis in a single session, so consolidated reports don't need manual stitching.

Scenario 4 — Customer support ticket triage and response drafting: Cost-driven. Sonnet 4.6 wins on API economics at scale. GPT-5.5 wins only if the workflow needs live web browsing mid-task to pull current order or policy data.

Scenario 5 — Multi-tool orchestration across custom services: MCP is now the common standard on both platforms. Claude's native MCP ecosystem has a head start, but ChatGPT adopted MCP in March 2025, so both platforms now consume the same servers.
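The five scenarios above can be expressed as a small routing table. This is a minimal sketch of the decision logic only: the `Task` enum, the `route` function, and the `needs_live_web` flag are hypothetical names introduced for illustration, and the returned strings are product labels from this article rather than API identifiers.

```python
from enum import Enum


class Task(Enum):
    CODE_REVIEW = "code_review"            # Scenario 1
    LEAD_ENRICHMENT = "lead_enrichment"    # Scenario 2
    FINANCIAL_REPORT = "financial_report"  # Scenario 3
    SUPPORT_TRIAGE = "support_triage"      # Scenario 4
    MULTI_TOOL = "multi_tool"              # Scenario 5


# Fixed picks for the scenarios that do not depend on extra conditions.
ROUTES = {
    Task.CODE_REVIEW: "Claude Code with Agent Teams",
    Task.LEAD_ENRICHMENT: "ChatGPT Agent Mode",
    Task.FINANCIAL_REPORT: "Claude Cowork",
    Task.MULTI_TOOL: "Either platform via MCP",
}


def route(task, needs_live_web=False):
    """Return the agent this article recommends for a given task type."""
    if task is Task.SUPPORT_TRIAGE:
        # Scenario 4 is cost-driven unless the task must browse mid-run.
        return "GPT-5.5" if needs_live_web else "Claude Sonnet 4.6"
    return ROUTES[task]


print(route(Task.CODE_REVIEW))                          # Claude Code with Agent Teams
print(route(Task.SUPPORT_TRIAGE))                       # Claude Sonnet 4.6
print(route(Task.SUPPORT_TRIAGE, needs_live_web=True))  # GPT-5.5
```

Keeping the table as data rather than nested conditionals makes it easy to revise a single route when a preview feature reaches general availability or a price changes.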

For a broader picture of how these task-type decisions roll up into a full stack, our AI Automation for Business (2026): Costs, Benefits & Use Cases guide walks through the architecture decisions in detail.

Six Mistakes That Lead Teams to the Wrong AI Agent

Most of the wrong-fit decisions come from a handful of repeatable errors. These are the six that cost teams the most in 2026.

- Comparing subscription prices instead of per-task API costs. The $20/$20 framing collapses the moment your workflow calls the API directly, where per-token costs determine the monthly bill, not the seat license.

- Assuming "ChatGPT API" always means GPT-5.5. GPT-5.4 is still live at half the price for both input and output tokens and performs comparably on most automation tasks, so workflows that haven't pinned a model version may have silently doubled their token spend.

- Defaulting to the flagship model for every task. Routing simple classification to Haiku 4.5 instead of Sonnet 4.6 cuts costs by roughly 67%, typically with little or no quality loss on routing or tagging workloads.

- Ignoring MCP compatibility when evaluating connector lock-in. Both platforms now support MCP, so proprietary connector counts matter far less than they did in 2025; the right question is which servers your stack actually needs.

- Treating context window size as a tiebreaker. Both platforms offer 1M-token context windows. The real differentiator is whether long-context requests incur a surcharge: Sonnet 4.6 and Opus 4.7 have none, while OpenAI's long-context modes carry a 2x input premium past 272K tokens.

- Building on one platform for every workflow type. Teams that automate at scale route by task: Claude for coding and document work, ChatGPT for browser-based and multimodal tasks. Picking a single vendor leaves performance and cost on the table.
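The tier-selection savings cited in the list above follow directly from the quoted prices. The sketch below checks the roughly 67% (Haiku 4.5 vs Sonnet 4.6) and 50% (GPT-5.4 vs GPT-5.5) figures on the 6M-input, 1M-output workload used earlier. The dictionary keys and function names are illustrative, not official identifiers.

```python
# USD per million tokens (input, output), as quoted in this article.
# Keys are illustrative labels, not official API model identifiers.
PRICES = {
    "haiku-4.5": (1.00, 5.00),
    "sonnet-4.6": (3.00, 15.00),
    "gpt-5.4": (2.50, 15.00),
    "gpt-5.5": (5.00, 30.00),
}


def workload_cost(model, input_millions, output_millions):
    """Cost in USD for a workload measured in millions of tokens."""
    input_price, output_price = PRICES[model]
    return input_millions * input_price + output_millions * output_price


def savings_pct(cheaper_model, pricier_model, input_millions, output_millions):
    """Percentage saved by running the same workload on the cheaper tier."""
    cheaper = workload_cost(cheaper_model, input_millions, output_millions)
    pricier = workload_cost(pricier_model, input_millions, output_millions)
    return (1 - cheaper / pricier) * 100


print(f"Haiku vs Sonnet:    {savings_pct('haiku-4.5', 'sonnet-4.6', 6, 1):.0f}%")  # 67%
print(f"GPT-5.4 vs GPT-5.5: {savings_pct('gpt-5.4', 'gpt-5.5', 6, 1):.0f}%")      # 50%
```

Because each cheaper tier is priced at the same fraction of its sibling on both input and output, these percentages hold regardless of the input-to-output mix.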

ChatGPT Agents vs Claude Agents — Frequently Asked Questions

Is Claude better than ChatGPT for automation?

Neither is universally better. Claude leads for coding agents, long-document workflows, and cost-efficient API automation at scale. ChatGPT leads for browser-based tasks, multimodal workflows involving image generation, and consumer-facing app integrations. The right choice follows the task type, not the platform brand.

What is MCP and why does it matter for AI agents?

Model Context Protocol is an open integration standard introduced by Anthropic in late 2024 and since adopted by OpenAI, Google DeepMind, and Microsoft. It lets any AI agent connect to external tools through a universal protocol instead of platform-specific connectors, which significantly reduces vendor lock-in across automation stacks.
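For a concrete sense of what "universal protocol" means, MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method. The sketch below only builds and prints such a request. The tool name `lookup_order` and its arguments are hypothetical, and a real client would also handle initialization, transport, and the response.

```python
import json


def build_tool_call(request_id, tool_name, arguments):
    """Build the JSON-RPC 2.0 request an MCP client sends to invoke a tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# "lookup_order" is a hypothetical tool exposed by some MCP server.
message = build_tool_call(1, "lookup_order", {"order_id": "A-1001"})
print(json.dumps(message, indent=2))
```

Because the request shape is the same no matter which model sits behind the client, one MCP server can serve both Claude and ChatGPT agents.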

Can ChatGPT and Claude agents be used together?

Yes. Many teams route tasks by strength: Claude Code for development automation, ChatGPT Agent Mode for browser-based and multimodal workflows. MCP compatibility on both platforms makes hybrid stacks practical without duplicating integration work across vendors.

Is ChatGPT Plus or Claude Pro better value at $20/month?

Both offer strong value at the same price. Claude Pro includes Claude Code and Cowork access for coding and desktop automation. ChatGPT Plus includes Agent Mode for browser automation and Advanced Voice Mode for multimodal use. The better-value answer depends on whether the primary workload is coding and document analysis or web-based and multimodal tasks.

Why did ChatGPT API costs increase in 2026?

OpenAI released GPT-5.5 on April 24, 2026 at $5/$30 per million tokens, double GPT-5.4's $2.50/$15 rate. GPT-5.4 remains available for teams where the cost increase isn't justified by the quality gain. Workflows that haven't pinned a model version may have silently migrated to the more expensive tier. Teams looking to offset costs can also check our How to Get Free Claude AI API Credits in 2026 guide for current paths.

Final Verdict — Matching the Right AI Agent to Your Automation Stack

The ChatGPT agents vs Claude agents decision has no single winner. Claude wins coding automation, long-context document analysis, and cost-efficient high-volume API workflows running on Sonnet 4.6 or Haiku 4.5. ChatGPT wins browser-based tasks, multimodal pipelines, and the broadest pre-built connector library. The real lever isn't Claude vs ChatGPT at all, though. It's which model tier inside each platform actually fits the task, since GPT-5.4 and Sonnet 4.6 are nearly identical in price while GPT-5.5 costs up to 80% more for comparable output. With MCP adoption converging the integration layer, the differentiator in 2026 is model-to-task fit, not connector count. To put this routing into a deployable architecture, see our n8n AI Automation Guide 2026: Real Cost, Use Cases & Benefits.